A few weeks ago Meta released a large language model to researchers, warts and all. These are small steps toward opening these kinds of neural networks up for widespread study. Large language models and image-making AIs have the potential to be world-changing technologies, but only if their toxicity is tamed. In 2020, when Google’s in-house ethics team raised problems with large language models, it sparked a fight that ended with two of the team’s leading researchers being fired. These companies are creating new marvels, but also new horrors, and moving on with a shrug. Yet they are pushing the boundaries of what AI can do, and their work shapes the kind of AI that all of us live with. That would be fine if these were simply proprietary tools. OpenAI is making DALL-E 2 available only to a handful of trusted users, and Google has no plans to release Imagen.

#HOW TO HIDE WORDS IN A PICTURE#

In short, these firms know that their models are capable of producing awful content, and they have no idea how to fix that. For now, the solution is to keep them caged up.

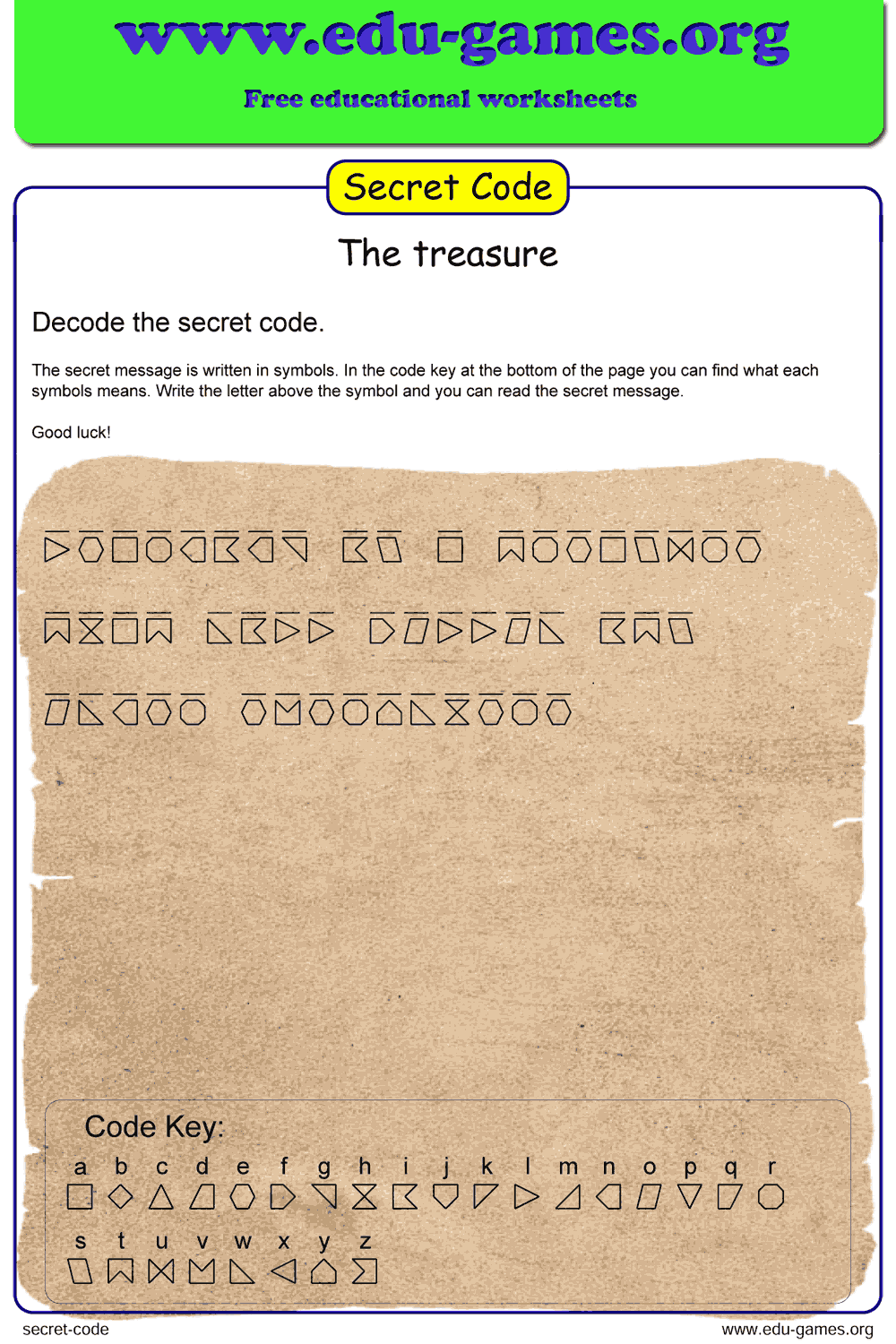

It’s no secret that large models, such as DALL-E 2 and Imagen, trained on vast numbers of documents and images taken from the web, absorb the worst aspects of that data as well as the best. OpenAI and Google explicitly acknowledge this. Scroll down the Imagen website, past the dragon fruit wearing a karate belt and the small cactus wearing a hat and sunglasses, to the section on societal impact and you get this: “While a subset of our training data was filtered to remove noise and undesirable content, such as pornographic imagery and toxic language, we also utilized LAION-400M dataset which is known to contain a wide range of inappropriate content including pornographic imagery, racist slurs, and harmful social stereotypes. Imagen relies on text encoders trained on uncurated web-scale data, and thus inherits the social biases and limitations of large language models. As such, there is a risk that Imagen has encoded harmful stereotypes and representations, which guides our decision to not release Imagen for public use without further safeguards in place.”

It’s the same kind of acknowledgement that OpenAI made when it revealed GPT-3 in 2020: “internet-trained models have internet-scale biases.” And as Mike Cook, who researches AI creativity at Queen Mary University of London, has pointed out, similar admissions appear in the ethics statements that accompanied Google’s large language model PaLM and OpenAI’s DALL-E 2.

#HIDE WORDS IN A PICTURE GENERATOR#

Imgflip’s meme generator is a free online image maker that lets you add custom resizable text, images, and much more to templates. People often use it to customize established memes, such as those found in Imgflip’s collection of Meme Templates. However, you can also upload your own templates or start from scratch with empty templates.

OK, so let’s write the actual image encoding function. We’re going to pass it the image location and the secret message. Then we’ll make a numpy array of that image, create a generator object for the secret message, and calculate the GCD of the image’s width and height. Finally, we’ll write the ugliest nested loop ever to actually encode the picture.
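The encoding steps described above can be sketched in plain Python. The steps are: take the image and the message, wrap the message in a generator, compute a GCD from the image dimensions, then loop over every pixel.

This is a minimal sketch under several assumptions that the text does not state:

- The image is already loaded as rows of `[r, g, b]` lists, standing in for the numpy array.
- Each character code is written into the red channel.
- The carrier pixels are those whose row-major index is a multiple of `gcd(width, height)`.
- A 0 value marks the end of the message.

The names `message_codes` and `encode_image` are hypothetical, not the tutorial's own code.

```python
import math
from typing import Iterator, List

# Hypothetical stand-ins for the numpy array the tutorial builds:
# an image is a list of rows, each row a list of [r, g, b] pixels.
Pixel = List[int]
Image = List[List[Pixel]]


def message_codes(msg: str) -> Iterator[int]:
    """Yield one 8-bit code per character, then a 0 end-of-message marker."""
    for ch in msg:
        code = ord(ch)
        # Each code must fit in a single colour channel (1..255);
        # 0 is reserved for the terminator.
        if not 0 < code <= 255:
            raise ValueError(f"character {ch!r} cannot be stored in one channel")
        yield code
    yield 0


def encode_image(img: Image, msg: str) -> Image:
    """Return a copy of img with msg hidden in the red channel.

    Assumed embedding rule (not taken from the original text): the carrier
    pixels are those whose flat index y * width + x is a multiple of
    gcd(width, height).
    """
    if not img or not img[0]:
        raise ValueError("image must have at least one pixel")
    height, width = len(img), len(img[0])
    step = math.gcd(width, height)
    encoded = [[list(px) for px in row] for row in img]  # leave the input untouched
    codes = message_codes(msg)

    # The nested loop: walk every pixel, writing into every step-th one.
    for y in range(height):
        for x in range(width):
            if (y * width + x) % step != 0:
                continue
            code = next(codes, None)
            if code is None:  # message and terminator already written
                return encoded
            encoded[y][x][0] = code

    # Out of pixels: fail if any code (including the terminator) is left over.
    if next(codes, None) is not None:
        raise ValueError("image is too small for this message")
    return encoded
```

Consider a 6-by-4 image. Here gcd(6, 4) = 2, so every second pixel in row-major order carries one code. That gives room for 12 codes, which is 11 characters plus the terminator. A real version would first load the file from the image location (for example with Pillow or OpenCV). It would also have to save the result in a lossless format such as PNG, because lossy compression like JPEG would alter the hidden values.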